
12/3/2023

Apache Iceberg example

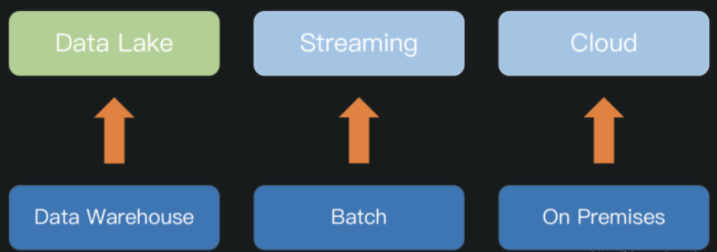

Apache Iceberg is an open table format for huge analytics datasets that can be used with common big data processing engines such as Apache Spark, Trino, PrestoDB, Flink and Hive. You can read more about Apache Iceberg and how to work with it in a batch job environment in our blog post “Apache Spark with Apache Iceberg - a way to boost your data pipeline performance and safety” written by Paweł Kociński.

This technology can be used not only in batch processing but can also be a great tool to capture real-time data that comes from user activity, metrics, logs, change data capture or other sources. Apache Iceberg provides mechanisms for read-write isolation and data compaction out of the box, to avoid small file problems. It's worth mentioning that Apache Iceberg can be used with any cloud provider or in-house solution that supports Apache Hive metastore and blob storage.

Kafka Connect Apache Iceberg sink

At GetInData we have created an Apache Iceberg sink that can be deployed on a Kafka Connect instance.

The files metadata table is useful for inspecting data file sizes and determining when to compact partitions. See the RewriteDataFiles Javadoc for more configuration options.

Iceberg uses metadata in its manifest list and manifest files to speed up query planning and to prune unnecessary data files. The metadata tree functions as an index over a table’s data. Manifests in the metadata tree are automatically compacted in the order they are added, which makes queries faster when the write pattern aligns with read filters. For example, writing hourly-partitioned data as it arrives is aligned with time range query filters.

When a table’s write pattern doesn’t align with the query pattern, metadata can be rewritten to re-group data files into manifests using rewriteManifests or the rewriteManifests action (for parallel rewrites using Spark). For example, a rewrite can target small manifests and group data files by the first partition field.
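To make the effect of re-grouping manifests concrete, here is a minimal plain-Java model. It is not the Iceberg API: the class `ManifestPruningSketch`, its methods, and the idea of representing each data file only by its partition hour are assumptions made for illustration. A manifest can be skipped when the range of hours it covers does not overlap the query range.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Toy model of manifest pruning in plain Java; this is not the Iceberg API.
class ManifestPruningSketch {

    // Groups data files (represented only by their partition hour) into
    // manifests of a fixed size, in the order given.
    static List<List<Integer>> toManifests(List<Integer> fileHours, int filesPerManifest) {
        List<List<Integer>> manifests = new ArrayList<>();
        for (int i = 0; i < fileHours.size(); i += filesPerManifest) {
            int end = Math.min(i + filesPerManifest, fileHours.size());
            manifests.add(new ArrayList<>(fileHours.subList(i, end)));
        }
        return manifests;
    }

    // Counts manifests whose [min, max] hour range overlaps the query range,
    // i.e. the manifests a planner could not skip.
    static int manifestsToScan(List<List<Integer>> manifests, int fromHour, int toHour) {
        int count = 0;
        for (List<Integer> manifest : manifests) {
            int min = Collections.min(manifest);
            int max = Collections.max(manifest);
            if (max >= fromHour && min <= toHour) {
                count++;
            }
        }
        return count;
    }

    // Models a rewrite: collect all files, sort by partition hour, regroup.
    static List<List<Integer>> regroupByPartition(List<List<Integer>> manifests, int filesPerManifest) {
        List<Integer> all = new ArrayList<>();
        for (List<Integer> manifest : manifests) {
            all.addAll(manifest);
        }
        Collections.sort(all);
        return toManifests(all, filesPerManifest);
    }

    public static void main(String[] args) {
        // Hypothetical write order that interleaves morning and afternoon hours.
        List<Integer> writeOrder = Arrays.asList(0, 12, 1, 13, 2, 14, 3, 15);
        List<List<Integer>> before = toManifests(writeOrder, 2);
        List<List<Integer>> after = regroupByPartition(before, 2);
        System.out.println("manifests to scan before rewrite: " + manifestsToScan(before, 0, 3));
        System.out.println("manifests to scan after rewrite: " + manifestsToScan(after, 0, 3));
    }
}
```

With this input, a query for hours 0 to 3 has to open all four manifests before the rewrite and only two afterwards, because each rewritten manifest covers a narrow hour range.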
Iceberg tracks each data file in a table. More data files lead to more metadata stored in manifest files, and small data files cause an unnecessary amount of metadata and less efficient queries due to file open costs.

Iceberg can compact data files in parallel using Spark with the rewriteDataFiles action. This will combine small files into larger files to reduce metadata overhead and runtime file open cost. The target size of the rewritten files is set through the "target-file-size-bytes" option.

Some tables can also benefit from rewriting manifest files to make locating data for queries much faster.
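The idea of combining small files up to a target size can be sketched in plain Java. This is only an illustration under stated assumptions: the class `CompactionSketch`, the greedy grouping strategy, and the sizes used are hypothetical, and the sketch does not claim to reproduce how Iceberg's rewriteDataFiles action actually plans its file groups.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of small-file compaction; not Iceberg's actual planner.
class CompactionSketch {

    // Greedily merges consecutive files so that each output file stays
    // within targetBytes. Returns the sizes of the output files.
    static List<Long> compact(List<Long> fileSizes, long targetBytes) {
        List<Long> outputs = new ArrayList<>();
        long current = 0;
        for (long size : fileSizes) {
            // Close the current output file if adding this one would exceed the target.
            if (current > 0 && current + size > targetBytes) {
                outputs.add(current);
                current = 0;
            }
            current += size;
        }
        if (current > 0) {
            outputs.add(current);
        }
        return outputs;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        List<Long> small = new ArrayList<>();
        for (int i = 0; i < 10; i++) {
            small.add(64 * mb); // ten hypothetical 64 MB files
        }
        System.out.println("output files: " + compact(small, 256 * mb).size());
    }
}
```

Ten 64 MB inputs with a 256 MB target collapse into three output files in this model, so a reader has three files to open instead of ten while the total amount of data is unchanged.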

Some tables require additional maintenance. For example, streaming queries may produce small data files that should be compacted into larger files.

To automatically clean metadata files, set =true in table properties; this property controls whether old tracked metadata files are deleted after each table commit. This will keep some metadata files (up to -versions-max) and will delete the oldest metadata file after each new one is created. Note that this will only delete metadata files that are tracked in the metadata log and will not delete orphaned metadata files.

Example: With =false and -versions-max=10, one will have 10 tracked metadata files and 90 orphaned metadata files after 100 commits. Configuring =true and -versions-max=20 will not automatically delete these orphaned metadata files. Tracked metadata files would be deleted again when reaching -versions-max=20. See table write properties for more details.

In Spark and other distributed processing engines, task or job failures can leave files that are not referenced by table metadata, and in some cases normal snapshot expiration may not be able to determine a file is no longer needed and delete it. To clean up these “orphan” files under a table location, use the deleteOrphanFiles action.

Iceberg uses the string representations of paths when determining which files need to be removed. The path can change over time, but it still represents the same file. For example, if you change authorities for an HDFS cluster, none of the old path URLs used during creation will match those that appear in a current listing. Please be sure the entries in your MetadataTables match those listed by the Hadoop FileSystem API to avoid unintentional deletion.
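The warning about string path comparison can be illustrated with a short plain-Java sketch. It does not call Iceberg or Hadoop: the class `OrphanPathSketch`, the host names `nn-old` and `nn-new`, and the file paths are all hypothetical. The sketch only shows what happens when a file listing is compared to referenced paths as exact strings.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

// Illustrates exact-string path matching; not the Iceberg or Hadoop API.
class OrphanPathSketch {

    // Returns the listed paths that do not appear, as exact strings,
    // among the paths referenced by table metadata.
    static Set<String> orphanCandidates(List<String> listed, List<String> referenced) {
        Set<String> candidates = new TreeSet<>(listed);
        candidates.removeAll(new HashSet<>(referenced));
        return candidates;
    }

    public static void main(String[] args) {
        // Paths as they were recorded when the files were written (hypothetical).
        List<String> referenced = Arrays.asList(
            "hdfs://nn-old:8020/warehouse/db/t/data/f1.parquet",
            "hdfs://nn-old:8020/warehouse/db/t/data/f2.parquet");

        // The same directory listed after the cluster authority changed.
        List<String> listedNow = Arrays.asList(
            "hdfs://nn-new:8020/warehouse/db/t/data/f1.parquet",
            "hdfs://nn-new:8020/warehouse/db/t/data/f2.parquet",
            "hdfs://nn-new:8020/warehouse/db/t/data/leftover.parquet");

        // Every listed file looks unreferenced, including the two live ones.
        System.out.println(orphanCandidates(listedNow, referenced));
    }
}
```

When the listing and the metadata use the same authority, only the leftover file is flagged. After the authority change, the two live data files are flagged as well, which is the unintentional deletion the text warns about.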

Data files are not deleted until they are no longer referenced by a snapshot that may be used for time travel or rollback. Regularly expiring snapshots deletes unused data files.

Iceberg keeps track of table metadata using JSON files. Each change to a table produces a new metadata file to provide atomicity. Old metadata files are kept for history by default. Tables with frequent commits, like those written by streaming jobs, may need to regularly clean metadata files.
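The metadata file arithmetic quoted earlier (10 tracked and 90 orphaned files after 100 commits) can be reproduced with a small simulation. This is a simplified sketch, not Iceberg code. The property names are truncated in the text, so the parameters `deleteAfterCommit` and `versionsMax` are hypothetical stand-ins for them, on the assumption that they correspond to Iceberg's `write.metadata.delete-after-commit.enabled` and `write.metadata.previous-versions-max` table properties.

```java
import java.util.ArrayDeque;
import java.util.Deque;

// Simplified model of metadata file retention; not Iceberg code.
class MetadataRetentionSketch {
    private final Deque<Integer> tracked = new ArrayDeque<>(); // metadata log, oldest first
    private int orphaned = 0; // dropped from the log but left on storage
    private int deleted = 0;  // dropped from the log and removed from storage
    private int nextVersion = 1;

    // One commit writes one new metadata file, then trims the log to
    // versionsMax entries. deleteAfterCommit and versionsMax are
    // hypothetical stand-ins for the truncated property names.
    void commit(boolean deleteAfterCommit, int versionsMax) {
        tracked.addLast(nextVersion++);
        while (tracked.size() > versionsMax) {
            tracked.removeFirst();
            if (deleteAfterCommit) {
                deleted++;
            } else {
                orphaned++;
            }
        }
    }

    int trackedCount() { return tracked.size(); }
    int orphanedCount() { return orphaned; }
    int deletedCount() { return deleted; }

    public static void main(String[] args) {
        MetadataRetentionSketch table = new MetadataRetentionSketch();
        for (int i = 0; i < 100; i++) {
            table.commit(false, 10);
        }
        System.out.println("tracked=" + table.trackedCount() + " orphaned=" + table.orphanedCount());
    }
}
```

In this model, switching to deletion with a limit of 20 after those 100 commits leaves the 90 orphaned files untouched: the log first grows from 10 to 20 entries, and only then does each new commit delete the oldest tracked file.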
